Getting point cloud data in and out of the cloud

Point clouds store highly detailed information about physical space. This produces large files that can become unwieldy, particularly as projects grow in size. Large projects can include hundreds (or even thousands) of scans, sometimes creating datasets in excess of a terabyte. This puts a strain on hardware capabilities and massively extends already lengthy processing periods. Learning how to deal with large point cloud datasets is one of the largest problems that creators of point clouds face.

Intuitively, cloud computing makes sense as a solution to this problem — it enables organisations to rent rather than buy expensive hardware, deploy solutions faster, reduce capital investments and focus on core competencies. While the benefits are real, there are risks as well.

In this article, we explain how planning for flexibility can help de-risk your move to the cloud. We will use Amazon Web Services (AWS) as an example, but one of the added complications of evaluating cloud services is comparing the offerings not just from AWS, but also from Microsoft Azure, Google Cloud and IBM.

When and why you will use the cloud for point clouds

The benefits that the cloud brings to point cloud processing are very similar to the reasons for its popularity across the business world — flexibility, collaboration and on-demand power. However, to understand how this specifically impacts surveyors, point cloud creation and getting point cloud data in and out of the cloud, it is worth recapping these benefits.

1. Scalability and on-demand processing

On-demand scalability is a significant draw for most applications of cloud computing. Users can scale their IT requirements up or down as and when required, paying only for what they need, without risking capability bottlenecks when taking on large projects or growing their business.

Cloud services have both the processing memory (RAM) and disk storage to handle very large amounts of data. Terabytes of RAM and petabytes of storage are available to handle any project when it arises. Surveyors do not need to preemptively invest in IT resources that they may not ever use, or may not use for long periods of time.

A public cloud environment is a pool of resources to be drawn on as and when needed. The ideal is to create a pool of the right size with cloud resources being used almost constantly. Managing the cost of cloud resources can be a science in itself, so care has to be taken to ensure cost and efficiency are in balance all the time. But it is a great flexible asset when approached right.

2. Access to near infinite speed

On-demand scalability allows users to scale up to almost any level of computing power. For large data sets, storage size is often the first scalable resource that comes to mind. But processing power can also scale in the cloud.

As we will get to, there are bandwidth issues with accessing the compute resources offered in the cloud. However, when it comes to point clouds specifically, scaling your access to virtual processing cores and threads can deliver huge benefits that are hard to duplicate with standard servers.

Some types of point cloud processing software are able to take advantage of multiple processing cores (and multithreaded processors) to register multiple scans simultaneously. The best commercial processors, like the i9-7980EX, have 18 cores. With hyperthreading, that delivers 36 threads, or the ability to undertake the coarse registration of 36 scans simultaneously. That is a lot. However, with the cloud, there is really no limit to the number of threads you can access.

What the cloud offers is the theoretical potential to register a near infinite number of scans simultaneously. The practical limits of that will hinge on your bandwidth access and processing software. However, it is an exciting possibility for surveyors regularly dealing with projects ranging into the thousands of scans — even if it practically only means a jump from registering a few dozen scans simultaneously to a few hundred.
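To get a feel for what that jump means, here is a rough sketch of the arithmetic. It assumes every scan takes the same time to register and that registration parallelises perfectly across threads, which real software and bandwidth limits will not quite deliver. The 1,000-scan project and the two minutes per scan are illustrative assumptions, not measurements.

```python
import math


def registration_batches(num_scans: int, threads: int) -> int:
    """Sequential rounds needed when `threads` scans can register at once."""
    if num_scans < 0 or threads < 1:
        raise ValueError("num_scans must be >= 0 and threads must be >= 1")
    return math.ceil(num_scans / threads)


def wall_clock_minutes(num_scans: int, threads: int, minutes_per_scan: float) -> float:
    """Idealised elapsed time: every round takes one scan's registration time."""
    return registration_batches(num_scans, threads) * minutes_per_scan


# Illustrative assumptions: 1,000 scans at 2 minutes each.
for threads in (36, 300):
    rounds = registration_batches(1000, threads)
    minutes = wall_clock_minutes(1000, threads, 2.0)
    print(f"{threads} threads: {rounds} rounds, about {minutes:.0f} minutes")
```

Under these assumptions, 36 threads need 28 rounds while 300 threads need only 4, which is the kind of difference the cloud can make for projects with thousands of scans.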

3. Collaboration

Cloud services enhance communication during a project: surveyors can even upload data in the field (given a fast mobile data connection) for immediate registration on cloud servers. The cloud provides a secure way to communicate and respond rapidly. You don’t have to be in the office, and you are not constrained to one or several machines.

For multiple offices and remote teams, collaboration becomes easier with data held in a cloud service. There is no need to send files back and forth, and make copies of copies in order to share information with the best person for the job.

With the cloud, everyone can access the same data from the same place, make changes in real time and collaborate using the same documents. Improved team collaboration is another reason businesses of all kinds want access to the cloud, and point cloud creation is no different.

Challenges of using the cloud for point clouds

Along with security and cost, data handling is consistently identified as a top barrier to using cloud services. Data requirements vary by application, datasets are often large and accessing data across even the fastest WAN connection is orders of magnitude slower than accessing it locally. Many of these issues are interrelated. Having a clear view of the trade-off between the risks and benefits is crucial, especially when costs can get out of control.

Bandwidth — in field and in office

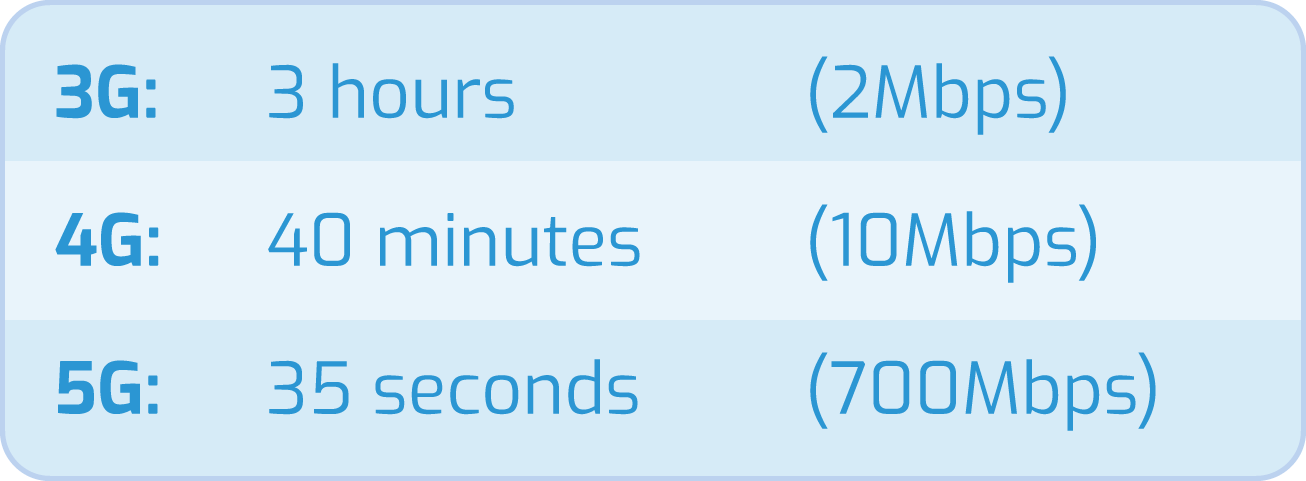

We’ve already highlighted the immense opportunity in uploading data straight from site to a cloud server — this will become an everyday occurrence with the introduction of 5G.

Without examples, it can be difficult to visualise what you can do on a 5G network vs a 4G network, or any other slower connection. Consider this: You upload a point cloud file that's 3GB in size. Here's how long it might take to upload on those different kinds of mobile networks (using realistic speeds, not peak speeds):

So, keeping an eye on the roll-out of 5G data services is definitely advised.

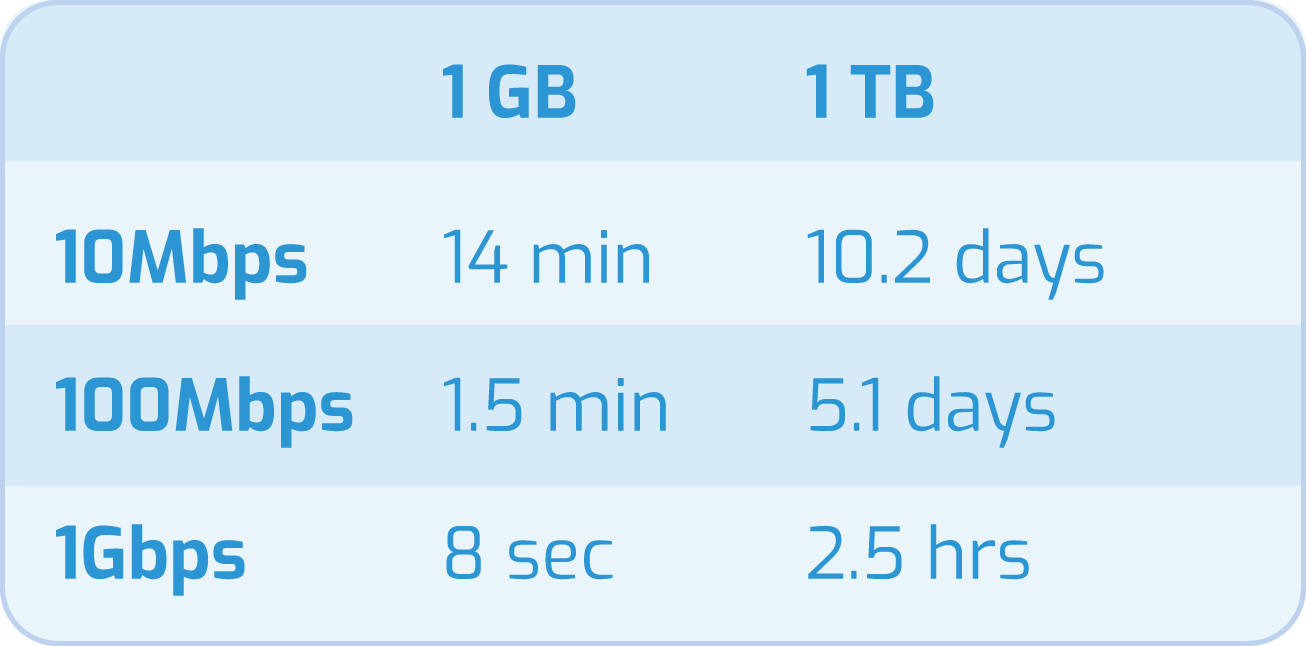

In the office, there are other challenges. The time it takes to transfer data to the cloud depends on the size of the organisation's Internet connection, and, of course, the data set. So how long would it take to upload data?

Fortunately 1Gbps services are reducing in price — but 100Mbps should be considered as the entry point for cloud connectivity from a company location.
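As a rough guide, upload time is simply the data size divided by the link speed. The sketch below works that out for the 3GB file and a terabyte-scale dataset at the 100Mbps and 1Gbps speeds mentioned above. It assumes decimal units (1GB = 10^9 bytes), a link used at full capacity and no protocol overhead; the `efficiency` parameter is a hypothetical knob for modelling a link that delivers less than its headline speed.

```python
GB = 10**9    # bytes, decimal units (assumption)
TB = 10**12   # bytes
MBPS = 10**6  # bits per second
GBPS = 10**9  # bits per second


def upload_seconds(size_bytes: float, link_bits_per_second: float,
                   efficiency: float = 1.0) -> float:
    """Best-case transfer time; efficiency < 1 models overhead or contention."""
    if link_bits_per_second <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link speed must be > 0 and efficiency in (0, 1]")
    return size_bytes * 8 / (link_bits_per_second * efficiency)


for label, size in (("3GB file", 3 * GB), ("1TB dataset", 1 * TB)):
    for name, speed in (("100Mbps", 100 * MBPS), ("1Gbps", 1 * GBPS)):
        hours = upload_seconds(size, speed) / 3600
        print(f"{label} over {name}: {hours:.2f} hours")
```

On these assumptions, a 3GB file takes about four minutes at 100Mbps, while a 1TB dataset takes roughly 22 hours at 100Mbps and a little over two hours at 1Gbps. Real transfers will be slower.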

Connectivity problems

Having a fast, reliable connection to the cloud is essential to running point cloud processing environments where data has to travel between on-premises environments and the cloud with low latency and high reliability. Look to implement resilient services with automatic fail-over between connections.

Cost problems

Analyst firm Gartner states that it is not unusual for public cloud bills to be 2x to 3x higher than expected. Gartner anticipates that, “Through 2020, 80% of organisations will overshoot their cloud budgets due to lack of cost optimisation approaches.” These approaches need to be underpinned with policies on servers and server types, tiers of storage and storage lifecycle (e.g. moving old files to cheaper storage), and network utilisation and security.

The main reason for these discrepancies is the different ways in which cloud plans are charged. Fees can differ for uploading data into the cloud, processing it there and downloading the results. Ultimately, you need to investigate those specifics on a plan-by-plan basis and make the best choice for your known use cases.

How to use the cloud for point clouds

Overcoming the challenges of accessing the cloud simply requires thinking about your provider and your requirements. The cloud will still deliver flexibility, but there may be investments that you need to make before the cloud will work for you. You also need to make sure that you sign up for the right cloud access plan.

Investigate SLAs

In today’s world, every customer expects services without disruption, especially when moving from operating on-premises to becoming a customer of cloud-based solutions. Yet even the largest cloud companies in the world have outages every year that affect their uptime commitments.

Analyse your system and calculate the uptime it requires. This will help you understand which commitments you can make to your business. However, that availability can be heavily affected by your own ability to react to failures and recover the system.

Along with price points, access guarantees are something that will be spelt out in your service level agreement (SLA). Make sure to read that agreement and get the outcomes that you need.
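When reading an SLA, it helps to turn an availability percentage into the downtime it actually permits. The following is a minimal sketch of that conversion, plus the combined availability of components that must all be working at once (for example, a cloud service and the network link to it). The series calculation assumes failures are independent, which is a simplification, and the function names are illustrative.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def allowed_downtime_hours(availability_percent: float,
                           period_hours: float = HOURS_PER_YEAR) -> float:
    """Downtime permitted over a period at a given availability level."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability_percent must be between 0 and 100")
    return period_hours * (1 - availability_percent / 100)


def combined_availability(*availability_percents: float) -> float:
    """Availability of components in series (all must be up), assuming
    their failures are independent."""
    combined = 1.0
    for percent in availability_percents:
        combined *= percent / 100
    return combined * 100


for level in (99.0, 99.9, 99.99):
    print(f"{level}% allows {allowed_downtime_hours(level):.2f} hours down per year")
```

A 99.9% commitment still allows almost nine hours of downtime a year, and two 99.9% components in series deliver slightly less than 99.9% between them.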

Invest in connectivity

Some specialised cloud providers offer data upload services, and it is possible to speed up data transfers by sending files in multiple streams and by using compression. And of course, some organisations have their data collocated at or near the cloud provider's site. However, for everyone else, it is imperative to think about the time it will take to get data to (and from) the cloud before jumping in.
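To illustrate the idea of multiple streams and compression, here is a minimal sketch using only the Python standard library. It splits a buffer into chunks, compresses each one and passes it to a `send_chunk` callable on a pool of worker threads. `send_chunk` is a hypothetical stand-in for whatever upload call your provider or tool exposes, and the chunk size and stream count are arbitrary assumptions. For brevity it works on data already in memory; a real tool would read from disk.

```python
import zlib
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterator, Tuple


def iter_chunks(data: bytes, chunk_size: int) -> Iterator[Tuple[int, bytes]]:
    """Yield (index, chunk) pairs so the receiver can reassemble in order."""
    for index, start in enumerate(range(0, len(data), chunk_size)):
        yield index, data[start:start + chunk_size]


def upload_in_streams(data: bytes,
                      send_chunk: Callable[[int, bytes], None],
                      chunk_size: int = 8 * 1024 * 1024,
                      streams: int = 4) -> int:
    """Compress each chunk and send it on one of `streams` worker threads.

    Returns the total number of compressed bytes handed to `send_chunk`.
    """
    def compress_and_send(item: Tuple[int, bytes]) -> int:
        index, chunk = item
        payload = zlib.compress(chunk)
        send_chunk(index, payload)  # hypothetical upload call
        return len(payload)

    with ThreadPoolExecutor(max_workers=streams) as pool:
        return sum(pool.map(compress_and_send, iter_chunks(data, chunk_size)))
```

How much compression helps depends heavily on the file format: scan data that is already compressed will shrink very little.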

Make sure that your internet connectivity is up to the task, and shift your investment focus to WAN connectivity, rather than on-premise hardware — although you will still need a good computer to handle CAD and point cloud files.

AWS, like the other major cloud providers, offers a Direct Connect service to establish a dedicated network connection between you and AWS. In many cases, this can reduce network costs, increase bandwidth throughput and provide a more consistent network experience than Internet-based connections.

As an example, a 1Gbps dedicated connection to a cloud service would cost around £500/month, plus a cloud service provider cost of around £1000/month. Do think about resilience and fail-over of network connectivity as well.

Manage your data

Although part of the draw of the cloud is the ability to flexibly accommodate unforeseen use cases, it is important to plan the management of your data in order to get the best outcomes from the cloud. This comes down to five main points:

- Ballpark all your costs in advance: Before going too far down the road of detailed planning, it’s useful to develop a “back of the envelope” monthly cost model. Consider factors such as data volumes, transfer times, how long data will reside in file systems vs. object stores, etc.

- Move the processing to the data: To state the obvious, the most efficient way to solve data handling challenges is to avoid them by moving the compute task to the data if you can.

- Use replication with care: Just because you can replicate your file system to the cloud doesn’t mean you should. Replicate data selectively and move only the data needed by each workload.

- Minimise costs with data tiering: Remember that different cloud storage tiers can have dramatically different costs. Rather than leaving persistent data on expensive cloud file systems, leave data in a lower-cost object store when not in use.

- Treat storage as an elastic resource: Employ automation to provision file systems only when dynamic cloud instances need them, and shut them down after use.

If you can do all of that, you will stand a good chance of using the cloud where it is best suited, avoiding the pitfalls of relying too heavily on the cloud, and improving your point cloud processing capabilities.
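The “back of the envelope” cost model from the first point, combined with the tiering advice, could be sketched along these lines. Every rate here is a hypothetical placeholder to be replaced with figures from your provider's price list, and the model deliberately ignores details such as request charges and minimum storage durations.

```python
from dataclasses import dataclass


@dataclass
class MonthlyRates:
    """Hypothetical per-unit prices; fill these in from a real price list."""
    file_system_per_gb_month: float
    object_store_per_gb_month: float
    upload_per_gb: float
    download_per_gb: float
    compute_per_hour: float


def monthly_cost(rates: MonthlyRates, dataset_gb: float,
                 days_on_file_system: float, upload_gb: float,
                 download_gb: float, compute_hours: float,
                 days_in_month: int = 30) -> float:
    """Rough monthly bill: the dataset sits on the file system for some
    days and in the cheaper object store for the rest of the month."""
    if not 0 <= days_on_file_system <= days_in_month:
        raise ValueError("days_on_file_system must fit within the month")
    fs_fraction = days_on_file_system / days_in_month
    storage = dataset_gb * (
        fs_fraction * rates.file_system_per_gb_month
        + (1 - fs_fraction) * rates.object_store_per_gb_month
    )
    transfer = upload_gb * rates.upload_per_gb + download_gb * rates.download_per_gb
    compute = compute_hours * rates.compute_per_hour
    return storage + transfer + compute


# Placeholder rates, not real prices.
example_rates = MonthlyRates(0.30, 0.02, 0.0, 0.10, 2.0)
tiered = monthly_cost(example_rates, 1000, 3, 1000, 100, 50)
always_on = monthly_cost(example_rates, 1000, 30, 1000, 100, 50)
print(f"tiered: {tiered:.2f}, always on file system: {always_on:.2f}")
```

Even with made-up numbers, a model like this shows quickly which line item dominates and how much is saved by keeping data on the file system only while it is being processed.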

Make sure you have the right software that can take advantage of what the cloud has to offer

New entrants into the point cloud software market often focus on a single aspect of point cloud creation, delivering improvements to either processing or modelling. This lends itself to cloud implementation. For example, several new processing programs use novel vector techniques to accelerate registration by as much as 40% to 80%. That speed and efficiency is particularly valuable in the cloud, where reducing total compute and user time directly reduces costs.

Look for software that automates manual processes and frontloads what remains. Cross-checking scans, changing parameters for every pairing and generally having to be involved throughout the processing period greatly increases the time costs of handling large datasets. The ability to build entire scan trees, walk away and deal with verification after the dataset has been processed greatly improves efficiency. This is also essential to take full advantage of the simultaneous point cloud processing offered by the cloud.

Look into the review processes available within programs. Ideally, you want options: checking the accuracy of data using either visualisation features or statistical data. The quality of the tools for reviewing large quantities of data directly affects your ability to trust the final output.

Pay particular attention to the interface for scan pairing (building a scan tree). For large projects, this can be a challenging aspect of the processing procedure. A system with a smooth interface that allows for the rapid alignment of scans will significantly reduce the effort and time it takes to handle a large dataset.

In addition to the critical ability to use multiple threads to process several scans simultaneously, there are three other software features that you should look out for to speed up processing: integration with existing cloud storage, support for major file formats and statistical reporting that gives confidence in the result.

A manageable challenge

Getting large datasets to and from the cloud is a challenge, but these challenges can be overcome with planning and research. When done right, the cloud actually offers a great solution to handling and processing large data sets.

Start by thinking about the level of precision required in the finished point cloud, along with the number of scans your survey will produce. Program capabilities will differ, and it is important to make sure that you have the options to reduce the size of datasets and then efficiently process them. Specifically, look out for software that can take advantage of multi-thread, simultaneous processing throughout the entire processing procedure.

If you look to the cloud to process your largest datasets, all you really need to worry about is having enough storage to keep the output and the bandwidth necessary to access your cloud solution.

Processing large datasets in the cloud isn’t fundamentally that different from processing smaller ones. Just plan ahead and give yourself enough time to evaluate the cloud services (low-cost piloting and trials on cloud services are your friends here) and ensure you have processes in place for handling and managing these files. The cloud offers great opportunities for point cloud processing — use them wisely.
