How to Automate LiDAR Data Classification

LiDAR applications have rapidly expanded across industries. In part, this has been enabled by advances in registration algorithms that have simplified the creation of reality capture datasets; check out our ebook “Point Cloud Processing Has Changed” if you want to learn more. The inclusion of LiDAR capabilities on the new iPhone is a good case in point for how widespread this technology has become.

While point cloud data contains vast amounts of spatial detail, that data isn't yet being used to its fullest. There is still a gap between generating point clouds and extracting data from them — a critical step for applying this data in real-world applications. The ability to drill down to individual components will create better and more detailed 3D models for use in all kinds of scenarios. This is what LiDAR data classification is all about — and automating that classification process is the key to widespread adoption. Let’s explain.

What is LiDAR data classification?

Data classification is the critical process of assigning values and meaning to data points within a point cloud. Fundamentally, it means labelling each point, or cluster of points, with the object it belongs to (and that object's characteristics).

Why is data classification so important? Let's look at some examples:

- In construction: You could detect building components automatically from your scan and help track progress, assess material needs, and detect safety risks.

- In planning: You could create detailed urban inventories to develop and maintain smart city and digital twin models.

- In environmental science: You could monitor large-scale geological trends and drill down even to tree species detection and density estimates.

All this can be made possible when clusters of LiDAR points have a classification assigned to them that defines the object type. Most commonly, you can classify LiDAR scans into several categories such as bare earth or ground, top of canopy, and water.

The American Society for Photogrammetry and Remote Sensing (ASPRS) has defined standard classification codes for the LAS format, and each class is stored in the LAS file as an integer code. This is a valuable set of standards. Indoor scans, however, are usually classified manually, with classes that are typically specific to the location scanned, and manual processes are simply too time-consuming to be deployed with real impact.
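To make the integer codes concrete, here is a minimal sketch in Python. The lookup table is only a subset of the standard ASPRS classes, and the list of per-point codes is hypothetical sample data rather than values read from a real LAS file (reading one would need a LAS library, which is not shown here).

```python
from collections import Counter

# A subset of the standard ASPRS point classes used in LAS files.
ASPRS_CLASSES = {
    0: "Created, never classified",
    1: "Unclassified",
    2: "Ground",
    3: "Low vegetation",
    4: "Medium vegetation",
    5: "High vegetation",
    6: "Building",
    7: "Low point (noise)",
    9: "Water",
}


def summarise_classes(codes):
    """Count points per class name; codes missing from the table are reported by number."""
    counts = Counter(codes)
    return {
        ASPRS_CLASSES.get(code, f"Other (code {code})"): n
        for code, n in sorted(counts.items())
    }


# Hypothetical per-point classification codes (assumption: illustrative only).
sample_codes = [2, 2, 2, 5, 5, 6, 9, 1, 2, 17]

summary = summarise_classes(sample_codes)
for name, n in summary.items():
    print(f"{name}: {n}")
```

A summary like this is often the first sanity check on a classified scan: a large share of "Unclassified" points suggests the classification step still has work to do.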

Manual vs automated LiDAR data classification

Point clouds require analysis to obtain information about contained objects and spatial properties. However, point clouds are challenging to classify automatically. To better understand "classification", it may help to think of it as labelling or annotation. It’s a question of who does the labelling, how fast they can do it, and how accurate it is.

There are four different ways that LiDAR data classification can occur, and the process of automating data classification is currently progressing through these multiple stages. Realistically, the best technology on the market sits somewhere within the third stage of the journey towards automation.

Stage 1: Fully manual

Traditionally, data classification has been carried out manually: assessing point clouds and assigning classification categories to point clusters. You are manually working with point clouds and carrying out the same tasks repeatedly. No matter how often you mark similar objects like a road, you will always have to do it again. That means you don't get any chance to automate repeated tasks or generate training data for AI/machine learning.

Stage 2: Cross-checked teaching

As we move towards the application of AI/ML, algorithms can deliver a first pass at classification, which surveyors then manually check for accuracy. The algorithm removes the need for cross-checks during processing and limits the need to set too many classification parameters. Just as importantly, this type of automated classification deliberately uses manual inputs to improve an AI/ML algorithm, helping teach the software how to upgrade outcomes. However, there are still a lot of manual tasks within cross-checked processes.
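The loop described above can be sketched as follows. This is an illustration under stated assumptions, not any particular product's workflow: the cluster IDs, labels, and the `review_first_pass` helper are all hypothetical.

```python
def review_first_pass(first_pass, verified):
    """Compare algorithm labels with surveyor-verified labels for the same clusters.

    Returns the share of clusters the algorithm got right and the corrections made.
    """
    corrections = {
        cluster_id: verified[cluster_id]
        for cluster_id, label in first_pass.items()
        if verified[cluster_id] != label
    }
    agreement = 1 - len(corrections) / len(first_pass)
    return agreement, corrections


# Hypothetical first-pass output from a classifier, keyed by cluster ID.
first_pass = {101: "road", 102: "building", 103: "vegetation", 104: "road"}
# The same clusters after a surveyor has checked them.
verified = {101: "road", 102: "building", 103: "building", 104: "road"}

agreement, corrections = review_first_pass(first_pass, verified)

# Every verified label, whether confirmed or corrected, can be kept as training data.
training_examples = list(verified.items())

print(f"Agreement: {agreement:.0%}, corrections: {corrections}")
```

Tracking the agreement rate over successive projects gives a simple signal of whether the manual input is actually improving the first pass.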

Stage 3: Automated verification

As classification algorithms improve, not every classification assignment needs cross-check verification. Just as in standard object detection software, a probability can be assigned to each classification, and parameters set to trigger cross-check reviews based on the level of certainty: for example, anything under 80% confidence. The ability to set different levels of certainty enables users to trade speed for accuracy depending on the nature of the project.

Automated verification systems are flexible and fast. Realistically, the amount of manual cross-checking required will vary by project specification and the complexity (or novelty) of the data being analysed. However, as with “cross-checked teaching”, automated verification uses human input to further refine and train models, helping reduce the long-term manual effort required for effective LiDAR data classification.
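Confidence-based review of this kind can be sketched in a few lines of Python. The `Prediction` type, the sample values, and the `split_for_review` helper are hypothetical and exist only to illustrate the idea; the 80% default mirrors the example figure given for this stage.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    cluster_id: int
    label: str
    confidence: float  # classifier's certainty for this label, from 0.0 to 1.0


def split_for_review(predictions, threshold=0.80):
    """Accept predictions at or above the threshold; queue the rest for manual review."""
    accepted = [p for p in predictions if p.confidence >= threshold]
    needs_review = [p for p in predictions if p.confidence < threshold]
    return accepted, needs_review


# Hypothetical classifier output (assumption: illustrative values only).
predictions = [
    Prediction(1, "ground", 0.97),
    Prediction(2, "building", 0.84),
    Prediction(3, "vegetation", 0.62),
    Prediction(4, "water", 0.79),
]

# Default setting: only low-certainty clusters go to a surveyor.
accepted, needs_review = split_for_review(predictions, threshold=0.80)

# A stricter setting trades speed for accuracy: more clusters are reviewed by hand.
strict_accepted, strict_review = split_for_review(predictions, threshold=0.95)

print(f"Review at 0.80: {[p.cluster_id for p in needs_review]}")
print(f"Review at 0.95: {[p.cluster_id for p in strict_review]}")
```

Raising the threshold sends more clusters to manual review, which is the speed-for-accuracy trade-off in practice.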

Stage 4: Fully automated

The goal of training data classification algorithms is a fully automated system. Realistically, this is simply an improved version of automated verification that is good enough to confidently assign classifications without the need for verification. However, confidence assessments (and the possibility of manual review) would remain part of the quality assurance in any good automated LiDAR classification software.

The Vercator approach to LiDAR classification

At Vercator, we've pioneered automation within point cloud processing and are applying the same technology to improve classification. Vector-based and multi-stage registration algorithms deliver a more robust registration that simplifies classification by improving the quality of analysis.

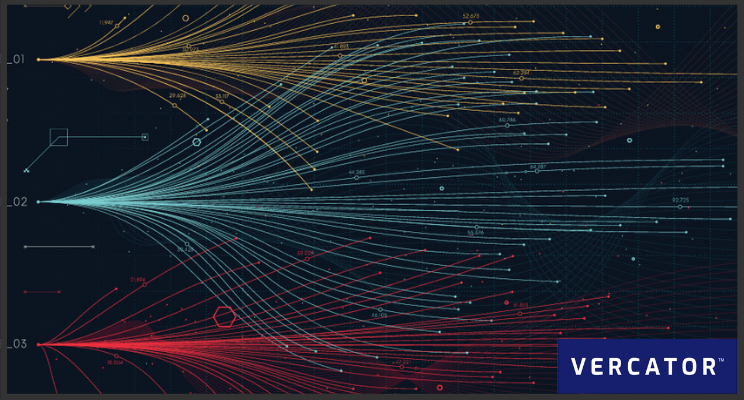

We have developed these algorithms to simplify and accelerate the combination of multiple data sets, for example, combining mobile scan data outputs with traditional point cloud data sets. The three defining components of our approach are:

- Vector-based processing: Extrapolating directional vectors with a normalised unit length of one from each point in a scan makes it possible to collapse an entire scan into a "vector sphere" while still retaining its unique and identifying characteristics. This increases the number of available options for classification review, along with simplifying registration of multiple scans.

- Multi-stage processing: Vector spheres enable registration in three stages. Placing spheres within spheres allows for independent rotational alignment. Once aligned on one axis, rapid point density analysis can be deployed to achieve horizontal and vertical alignment at far greater speed without reducing quality.

- Cloud-ready processing: Vercator takes advantage of scalable computing power available in the cloud to process multiple scans simultaneously and rapidly accelerate processing time — a technique that can also be applied to classification in order to accelerate analysis.
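To illustrate only the normalisation step behind the "vector sphere" idea, the sketch below scales a handful of direction vectors to unit length so that they all lie on a sphere of radius one. It is not Vercator's implementation: how the directional vectors are derived from the points is not described here, so the input vectors and the `to_unit_vector` helper are hypothetical.

```python
import math


def to_unit_vector(v):
    """Scale a 3D vector to length one, keeping its direction."""
    x, y, z = v
    length = math.sqrt(x * x + y * y + z * z)
    if length == 0.0:
        raise ValueError("a zero-length vector has no direction")
    return (x / length, y / length, z / length)


# Hypothetical directional vectors, one per point (assumption: illustrative values).
vectors = [(0.0, 0.0, 2.0), (3.0, 4.0, 0.0), (1.0, 1.0, 1.0)]

# After normalisation every vector ends on the unit sphere.
sphere = [to_unit_vector(v) for v in vectors]

for original, unit in zip(vectors, sphere):
    print(original, "->", tuple(round(c, 3) for c in unit))
```

Because only direction survives normalisation, where the scanner stood no longer matters, which is consistent with rotational alignment being handled independently in the multi-stage step.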

The primary outcome is that Vercator innovation delivers a more powerful and flexible use of scan data. Even if you have a standard point cloud scan of an area, you can layer data sets, using millions of reference points to align that data. Integrating scan data with other data sets feeds the AI-based recognition of objects and makes classification outputs much more accurate.

Enabling outcomes with the right software

So, can surveyors trust automated point cloud classification?

The answer at this stage of its evolution is "trust but verify". Good automation software will help you understand certainty levels and direct users to cross-check specific issues. The technology is still developing. Although there may be more “hype” than “reality” at this point, we aren’t far off from highly automated classification workflows — and there are already efficiency gains to be made by investing in the right software.

Choosing the right software is critical to outcomes you can trust. Any technology's success comes back to outcomes, and data classification (and automated classification) will improve results. By automating, you increase efficiency and bring down costs, enabling more opportunities and innovation. Watch this space: we think there will be significant improvements throughout 2021.
